“Train on one basin, deploy on another, without showing the model a single label from the new basin. CORAL alignment makes this work. The architectural cost: a second-moment matching loss at the bottleneck.”

Abstract

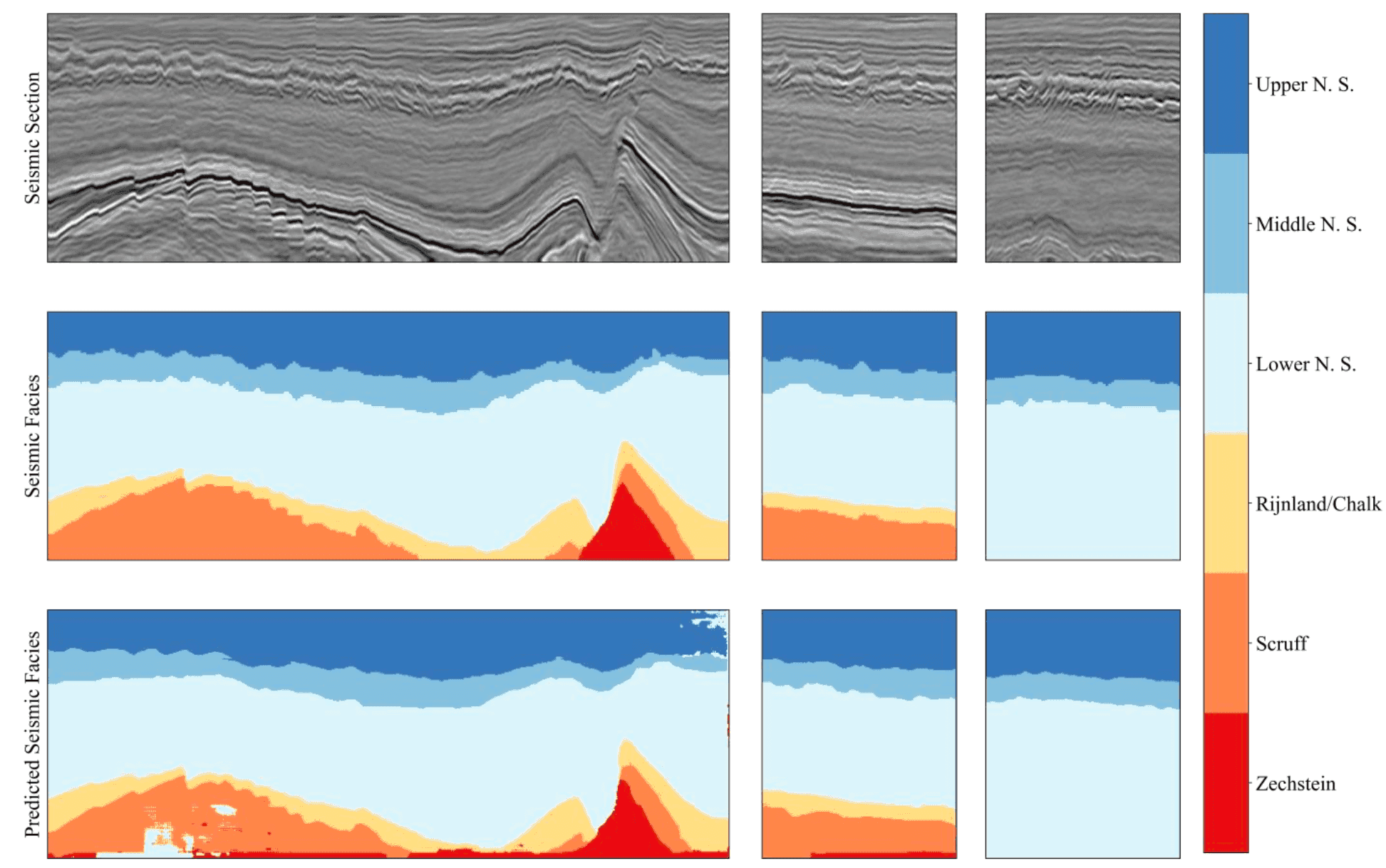

This work — published in IEEE Transactions on Geoscience and Remote Sensing (60, 1–16, 2022, Art. no. 4508116) — applies deep neural networks to the accurate interpretation and classification of seismic facies, with a specific focus on the labelled-data-scarcity problem and distribution shift between the basin you trained on and the basin you want to deploy to.

Two contributions:

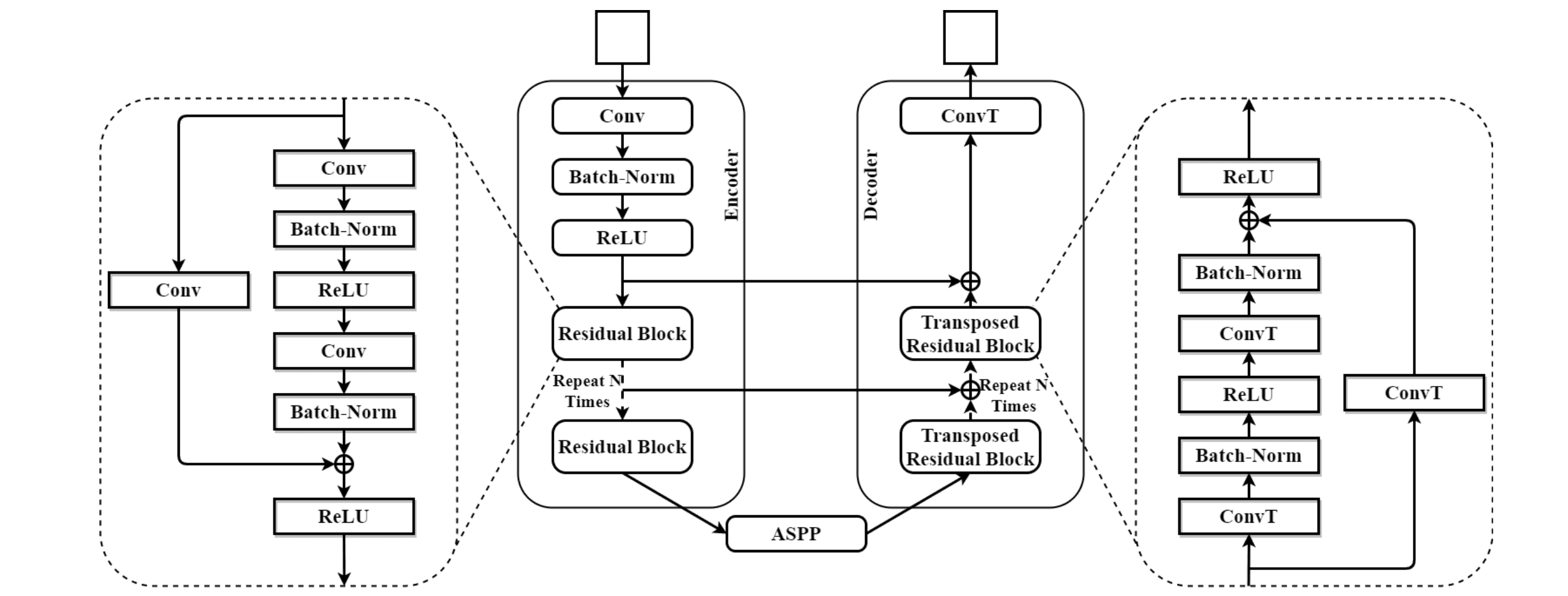

- EarthAdaptNet (EAN) — a residual-block + transposed-residual-block encoder-decoder architecture optimised for seismic semantic segmentation, particularly for under-represented facies classes.

- EAN-DDA: EAN extended with CORAL (Correlation Alignment) for unsupervised deep domain adaptation. It lets you train on a labelled source basin and apply the model to an unlabelled target basin without using any target labels, while preserving source-domain accuracy.

Headline numbers:

- F3 block (source domain): pixel accuracy >84%, minority-class accuracy ~70%.

- Penobscot (target domain, no target labels used in training): peak class accuracy ~99% on class 2; overall accuracy >50%.

EAN-DDA, IEEE TGRS 2022 results: application of CORAL DDA to seismic facies classification (F3 → Penobscot domain-adaptation matrix)

The same EAN architecture under two training regimes, each evaluated on held-out test slices from F3 (source, labelled) and Penobscot (target, no labels used in training). Without CORAL, Penobscot performance collapses, which is typical cross-basin behaviour. With CORAL, it recovers to production-usable levels without the network ever seeing a Penobscot label.

- No adaptation, tested on F3 (minority-class accuracy ~70%): in-domain training and testing on F3. This is the architectural baseline, i.e. what EAN delivers when source labels are abundant.

- No adaptation, tested on Penobscot (large domain gap): F3-trained EAN deployed on Penobscot without adaptation. A different acquisition vintage and a different basin make accuracy degrade sharply.

- CORAL alignment, tested on F3 (no source loss): CORAL alignment is added at the encoder bottleneck, and source-domain accuracy is preserved; adaptation does not hurt the labelled-domain scores.

- CORAL alignment, tested on Penobscot (>50% overall): peak class-2 accuracy recovers to ~99% and overall accuracy crosses 50%, without the model seeing a single Penobscot label during training.

Index Terms: CORAL, deep learning, domain adaptation, EarthAdaptNet, seismic facies, semantic segmentation.

I. Introduction

Accurate interpretation of geologic features and reservoir properties is foundational to hydrocarbon exploration and production. Automating that interpretation using deep neural networks (DNNs) has gathered substantial momentum since 2017 — but two problems block the path to production:

Labelled-data scarcity. Annotating a 3D seismic volume is expensive and slow. The community has explored:

- Weakly-supervised learning (slice-level labels)

- Similarity-based data retrieval (find labelled examples that look like the query)

- Weakly-supervised label-mapping algorithms

- Unsupervised machine learning (clustering, autoencoders)

Domain shift. Even when one basin has labels, a model trained on it generalises poorly to a new basin. Different acquisition vintages, processing parameters, and stratigraphic settings all produce systematically different images of "the same" geology, so source and target data come from different probability distributions. This is the fundamental reason naive transfer fails: at deployment, the source-trained model meets a distribution it never saw in training.

Transfer learning (reusing a model trained on one task as the starting point for a related task, usually by fine-tuning) has shown promise in reducing the cost of training DNNs from scratch. Its application in earth science is unusually challenging, however, because the source-target gap can be large and opaque: you often don't know in advance along which axes the source and target differ. Transfer learning also typically needs some target-domain labels, whereas unsupervised DDA uses none.

Unsupervised deep domain adaptation (DDA) is the framework this paper applies: a deep network's hidden representations serve as the alignment surface for transferring knowledge across domains, without relying on target-domain labels.

The specific question we address: can we train on F3 and deploy to Penobscot, given that the two basins show similar reflection patterns but the model never sees a Penobscot label during training?

II. Network architecture and proposed approach

The full EAN architecture is described in detail in our companion architecture post. The headline features:

- Residual Blocks (RBs) in the contracting path — two convolutions + batch norm + 1×1 downsampling residual connection

- Transposed Residual Blocks (TRBs) in the expanding path — mirror structure with transposed convolutions

- U-Net-style skip connections at every depth, preserving spatial detail through the bottleneck

For domain adaptation, we add CORAL (CORrelation ALignment; Sun et al., 2016, "Return of Frustratingly Easy Domain Adaptation", AAAI), a feature-distribution-matching technique that minimises the difference between source-domain and target-domain second-order statistics. It is computationally cheap (no adversarial training, no extra discriminator network) but surprisingly effective at closing distribution gaps.

CORAL aligns the covariance matrices of the source and target features (their second-order moments), encouraging the encoder to produce representations that look statistically the same on both domains.
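For reference, the usual deep formulation of this idea (the Deep CORAL loss of Sun and Saenko, 2016, which extends the linear CORAL of Sun et al.) is written below. We assume EAN-DDA's alignment term has this form; its exact normalisation may differ. D_S (n_S × d) and D_T (n_T × d) are the source and target bottleneck features flattened to d dimensions, 1 is a column vector of ones, and the norm is the Frobenius norm.

```latex
\begin{aligned}
C_S &= \frac{1}{n_S - 1}\left( D_S^{\top} D_S - \frac{1}{n_S}\,(\mathbf{1}^{\top} D_S)^{\top}(\mathbf{1}^{\top} D_S) \right), \\
C_T &= \frac{1}{n_T - 1}\left( D_T^{\top} D_T - \frac{1}{n_T}\,(\mathbf{1}^{\top} D_T)^{\top}(\mathbf{1}^{\top} D_T) \right), \\
\ell_{\mathrm{CORAL}} &= \frac{1}{4d^{2}}\,\lVert C_S - C_T \rVert_F^{2}.
\end{aligned}
```

Only target features, never target labels, enter C_T, which is what makes the term usable on an unlabelled basin.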

The classification head trains on source labels only. The CORAL alignment loss closes the source-target distribution gap at the encoder bottleneck. End-to-end, the model learns to produce a representation where source-trained classifiers also work on target data.
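The joint objective can be sketched in plain Python. This is a minimal sketch, assuming the Deep CORAL form (unbiased covariances, squared Frobenius distance scaled by 1/(4d²)) and a hypothetical weight `coral_weight`; the function names are illustrative, not taken from the paper's code. In a real model the feature rows would be flattened bottleneck activations of a source batch and a target batch, and the loss would live in an autodiff framework so gradients reach the encoder.

```python
# Minimal sketch of a CORAL-regularised objective in plain Python.
# Assumptions (not from the paper's code): the Deep CORAL loss form, an
# unbiased (n - 1) covariance, and a hypothetical weight `coral_weight`.


def covariance(features):
    """Unbiased d x d covariance of an n x d feature matrix given as a list of rows."""
    n, d = len(features), len(features[0])
    means = [sum(row[j] for row in features) / n for j in range(d)]
    centred = [[row[j] - means[j] for j in range(d)] for row in features]
    return [
        [sum(r[i] * r[j] for r in centred) / (n - 1) for j in range(d)]
        for i in range(d)
    ]


def coral_loss(source_feats, target_feats):
    """Squared Frobenius distance between source and target covariances, / (4 d^2)."""
    d = len(source_feats[0])
    c_s = covariance(source_feats)
    c_t = covariance(target_feats)
    sq_frobenius = sum((c_s[i][j] - c_t[i][j]) ** 2 for i in range(d) for j in range(d))
    return sq_frobenius / (4 * d * d)


def total_loss(source_class_loss, source_feats, target_feats, coral_weight=1.0):
    """Source-only classification loss plus the weighted, label-free CORAL term."""
    return source_class_loss + coral_weight * coral_loss(source_feats, target_feats)


if __name__ == "__main__":
    # Toy bottleneck features: the target batch is the source batch scaled by 2,
    # so its covariance is 4x larger and the CORAL term is positive.
    source = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.0, 0.0]]
    target = [[2.0 * v for v in row] for row in source]
    print(coral_loss(source, source))  # identical batches: 0.0
    print(coral_loss(source, target))
```

Note that `total_loss` never takes target labels as an argument: the classification term sees only source data, and the target batch contributes solely through its feature covariance.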

III. Experimental setup

Two datasets:

- F3 block 3D (offshore Netherlands) — source domain, fully labelled. 6 facies classes; we focus on the 3 best-represented for the domain-adaptation experiments.

- Penobscot 3D (offshore Canada) — target domain, labels unused during training.

We use three representative seismic facies classes per dataset, chosen because they share similar depositional and compositional environments. That shared geology is the precondition that makes domain adaptation feasible.

We follow the standard DDA protocol:

- Use all labelled source-domain data

- Use unlabelled target-domain data

- Evaluate on held-out source data and on Penobscot data, whose labels are used only for this evaluation
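The protocol above can be sketched as a training-data stream in which target labels are simply never passed in. This is a hypothetical helper, not the paper's code; cycling the (possibly smaller) target set and matching source and target batch sizes are assumptions.

```python
from itertools import cycle, islice

# Sketch of the unsupervised-DDA training stream (hypothetical helper).
# Source patches come with labels; target patches come without, so target
# labels cannot enter the training loop. Cycling the target set and equal
# batch sizes are assumptions, not details from the paper.


def dda_batches(source_pairs, target_patches, batch_size):
    """Yield (source_x, source_y, target_x) mini-batches for one source epoch.

    source_pairs:   list of (patch, label) tuples from the labelled source basin.
    target_patches: list of unlabelled patches from the target basin.
    """
    target_stream = cycle(target_patches)
    for start in range(0, len(source_pairs), batch_size):
        batch = source_pairs[start:start + batch_size]
        source_x = [x for x, _ in batch]
        source_y = [y for _, y in batch]
        target_x = list(islice(target_stream, len(batch)))
        yield source_x, source_y, target_x
```

Evaluation would then run separately on held-out source patches and on target patches whose labels are loaded only at that stage.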

Patch images are generated from both volumes (40×40 with stride 10, the same protocol as the architecture papers).
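The patch protocol can be sketched as a sliding window. Only the 40×40 size and stride of 10 come from the text; the function names and the choice to keep only windows that fit fully inside the slice (no padding) are assumptions.

```python
# Sketch of sliding-window patch extraction: 40x40 patches with stride 10.
# Assumption: only windows that fit entirely inside the slice are kept; the
# original pipeline's edge handling (padding, extra border patches) may differ.


def patch_origins(height, width, patch=40, stride=10):
    """Top-left (row, col) corners of every full patch x patch window."""
    if height < patch or width < patch:
        return []
    rows = range(0, height - patch + 1, stride)
    cols = range(0, width - patch + 1, stride)
    return [(r, c) for r in rows for c in cols]


def extract_patches(slice_2d, patch=40, stride=10):
    """Cut a 2-D seismic slice (list of rows) into overlapping square patches."""
    height, width = len(slice_2d), len(slice_2d[0])
    return [
        [row[c:c + patch] for row in slice_2d[r:r + patch]]
        for r, c in patch_origins(height, width, patch, stride)
    ]
```

With stride 10, neighbouring patches overlap by 30 pixels, so an interior pixel falls inside 4 × 4 = 16 patches. That overlap is what multiplies the number of training samples drawn from each annotated slice.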

IV. Results

EAN on F3 (in-domain, labels available):

- Pixel accuracy: >84%

- Minority-class accuracy: ~70%

- Outperforms baseline architectures across all facies classes — the gain is largest on the under-represented classes, where the residual + transposed-residual structure preserves the gradient signal that lets the model learn from few examples.

EAN-DDA on Penobscot (out-of-domain, no target labels used during training):

- Peak class accuracy: ~99% on class 2 of Penobscot

- Overall accuracy: >50%

- The CORAL-aligned features generalise well for the dominant classes; minority classes are harder (consistent with the in-domain pattern).
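How a ~99% peak class accuracy can coexist with a >50% overall accuracy is easiest to see from a confusion matrix. The sketch below assumes that "class accuracy" means per-class recall and "overall accuracy" means pixel accuracy; the paper's exact metric definitions may differ, and the test counts are made up for illustration.

```python
import math

# Sketch: pixel accuracy versus per-class accuracy from a confusion matrix.
# confusion[i][j] counts pixels whose true facies is i and predicted facies is j.
# Assumption: "class accuracy" means per-class recall; the paper's exact
# metric definitions may differ.


def pixel_accuracy(confusion):
    """Fraction of all pixels assigned their true class."""
    correct = sum(confusion[i][i] for i in range(len(confusion)))
    total = sum(sum(row) for row in confusion)
    return correct / total


def class_accuracies(confusion):
    """Per-class recall; NaN for classes with no pixels in the ground truth."""
    return [
        row[i] / sum(row) if sum(row) else math.nan
        for i, row in enumerate(confusion)
    ]


def mean_class_accuracy(confusion):
    """Unweighted mean of the per-class accuracies, ignoring absent classes."""
    accs = [a for a in class_accuracies(confusion) if not math.isnan(a)]
    return sum(accs) / len(accs)
```

For a hypothetical matrix such as `[[2, 8, 0], [0, 10, 0], [0, 5, 5]]`, the second class scores 100% while pixel accuracy is about 57%: when a model over-predicts one class, that class's recall can be near perfect while the errors pile up in the others.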

The big takeaway: a model trained on F3 alone, then adapted to Penobscot with no Penobscot labels, reaches production-usable accuracy on the new basin. That result has direct operational value: an operator who has annotated one basin can deploy that effort across many. CORAL alignment turns labelling cost from a per-basin overhead into a one-time investment.

V. Conclusions

Three contributions worth highlighting:

- EAN architecture for accurate semantic segmentation of seismic facies — particularly strong on classes with limited labelled data, beating baseline architectures across the board.

- CORAL integration producing EAN-DDA — the first application (to our knowledge) of this domain-adaptation technique to seismic facies classification.

- Empirical validation across two real public datasets — F3 and Penobscot — showing that the approach is not just theoretically sound but practically deployable.

Future work centres on extending the DDA framework to more divergent source-target pairs (where the basins share less geological structure) and on integrating the approach with real operator archives where labels are even sparser.

Reference

M. Q. Nasim, T. Maiti, A. Srivastava, T. Singh, J. Mei. Seismic Facies Analysis: A Deep Domain Adaptation Approach. IEEE Transactions on Geoscience and Remote Sensing, 60, 1–16, 2022, Art. no. 4508116. DOI: 10.1109/TGRS.2022.3151883

Key takeaways

- EAN-DDA is, to our knowledge, the first application of CORAL alignment to seismic facies classification. It works here because CORAL aligns the second-order (covariance) statistics, which capture most of the inter-basin shift between F3 and Penobscot.

- Source-domain accuracy is *preserved* under CORAL alignment — adaptation does not hurt the labelled-domain scores. This is non-trivial; many DA techniques regress on source.

- Peak class-2 accuracy on Penobscot recovers to ~99% without ever showing the model a Penobscot label — production-usable cross-basin transfer.

- The architectural cost of all this is one second-moment matching loss at the encoder bottleneck. Cheap, no adversarial training, no extra discriminator network — surprisingly under-used outside this paper.

Glossary

- CORAL: CORrelation ALignment (Sun et al., 2016), a domain-adaptation technique that aligns the covariance matrices of source-domain and target-domain features. Computationally cheap (no adversarial training, no extra discriminator network), but surprisingly effective at closing distribution gaps.

- Covariance matrix: a matrix encoding the pairwise covariances between every pair of dimensions of a multivariate distribution. CORAL aligns the source and target covariance matrices (the second-order moments), which captures most of the inter-domain shift in many practical cases.

- DDA: Deep Domain Adaptation, using a deep neural network's hidden representations as the alignment surface for cross-domain transfer. The 'unsupervised' variant requires no labels in the target domain.

- Distribution shift: source and target data drawn from different probability distributions (different basin, different scanner, different season). This is the fundamental reason naive transfer fails: the source-trained model assumes a distribution it no longer sees at deployment.

- Semantic segmentation: per-pixel classification, assigning each pixel of an image to one of N classes. In seismic interpretation, the classes are facies (rock-type interpretations); in medical imaging, they are tissues.

- Source domain: the dataset where labels are abundant. In this paper: F3 block (offshore Netherlands), with 6 facies classes annotated by Alaudah et al.

- Target domain: the dataset where labels are scarce or absent, i.e. the basin you actually want to deploy on. In this paper: Penobscot 3D (offshore Canada). Labels exist for evaluation but are never used during training.

- Transfer learning: reusing a model trained on one task as the starting point for a related task, usually by fine-tuning. Distinct from domain adaptation: transfer learning typically uses some target-domain labels, while unsupervised DDA uses none.