
Technology in the Oil and Gas Industry: An MLOps Perspective

This study was published in HackerNoon and gives an overview of MLOps and its importance in the oil and gas industry.


The oil and gas industry generated approximately $3.3 trillion in revenue in 2019, making it one of the largest sectors in the world. Upstream, midstream and downstream oil and natural gas processes constantly generate large amounts of data and depend heavily on sophisticated technologies to reveal new business insights, for example to prevent equipment malfunction and improve operational efficiency…

In recent times, the industry has become a trend-setter in technology and is moving towards automation… and hence towards a dependence on artificial intelligence (AI).

Big players like Royal Dutch Shell continuously look for new ways to keep up with the growing demand for dwindling resources. Shell's software development team keeps its software up to date and enhanced with new features. Shell's software revolution is visible in cloud collaboration, in "Software as a Service" (SaaS) solutions, in faster-moving environments, and in becoming more competitive through DevOps.

So the question is: what next?

How can companies keep pace with the rest of the O&G industry and stay abreast of developing technologies, with an eye on the future?

Solution: a robust and scalable MLOps pipeline.

MLOps: The future of Machine Learning

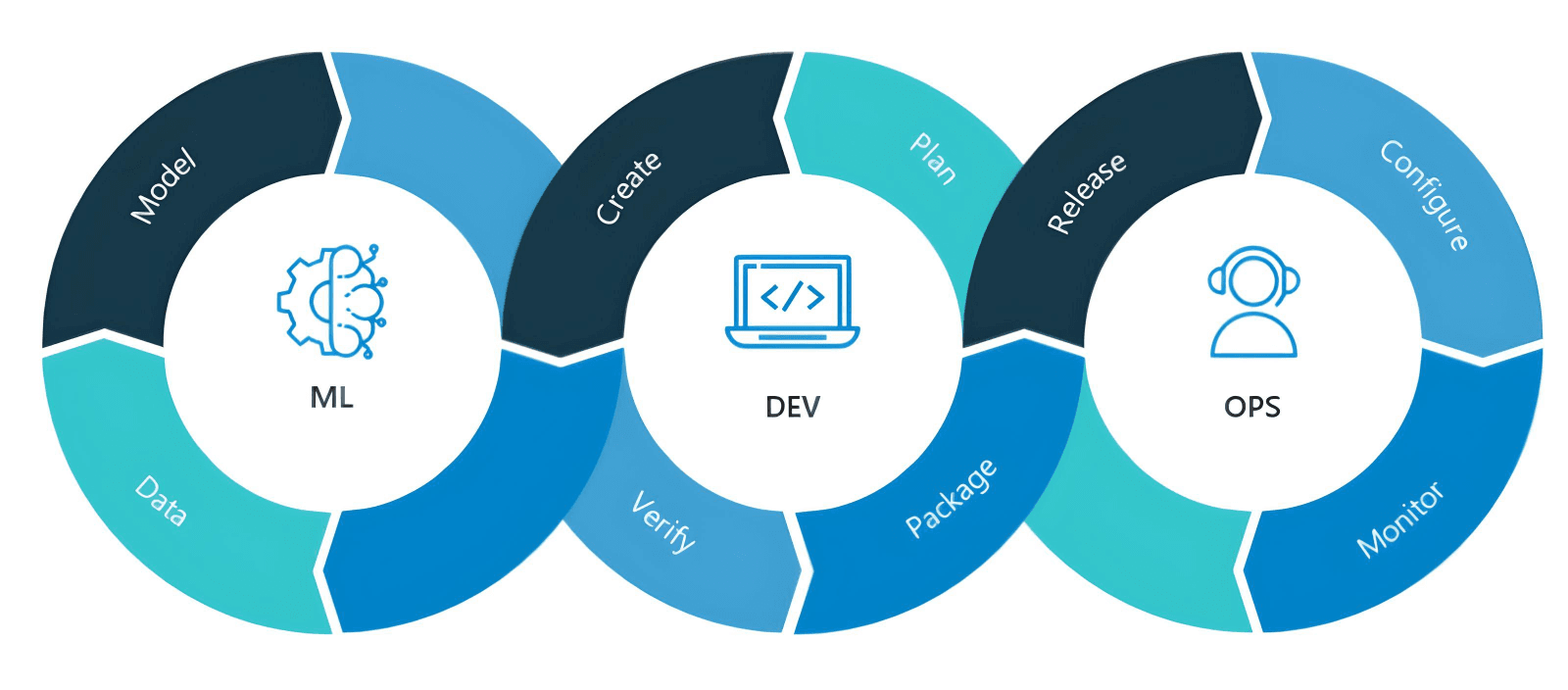

MLOps is the standardization and streamlining of machine learning lifecycle management. It refers to the concept of automating the lifecycle of machine learning models from data preparation and model building to production deployment and maintenance.

There are three key reasons why managing machine learning lifecycles at scale is challenging:

- Data is constantly changing, and business needs shift as well.

- Results need to be continually relayed back to the business to ensure that the model in production, running on production data, aligns with expectations.

- The machine learning lifecycle involves people from the business, data science, and IT teams, and none of these groups use the same tools or share the same fundamental skills to serve as a baseline for communication.
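The first two points can be made concrete with a small monitoring check. The sketch below is an illustration only and is not taken from the original study: it compares production values of a feature against a training-time reference and flags a shift when the production mean has moved by more than a chosen number of reference standard deviations. The function name `mean_shift_detected`, the threshold of 0.5, and the synthetic data are all hypothetical choices.

```python
import numpy as np

def mean_shift_detected(reference, production, threshold=0.5):
    """Return True when the production mean differs from the reference mean
    by more than `threshold` reference standard deviations."""
    reference = np.asarray(reference, dtype=float)
    production = np.asarray(production, dtype=float)
    ref_std = reference.std()
    if ref_std == 0.0:
        # Constant reference: any difference in means counts as a shift.
        return bool(production.mean() != reference.mean())
    shift = abs(production.mean() - reference.mean()) / ref_std
    return bool(shift > threshold)

# Synthetic stand-ins for a single model input feature (assumption).
rng = np.random.default_rng(0)
reference = rng.normal(loc=0.0, scale=1.0, size=5000)  # training-time data
stable = rng.normal(loc=0.0, scale=1.0, size=5000)     # production, no drift
drifted = rng.normal(loc=2.0, scale=1.0, size=5000)    # production, shifted

stable_flag = mean_shift_detected(reference, stable)
drifted_flag = mean_shift_detected(reference, drifted)
```

A check like this could run on a schedule, with a flagged shift reported back to the business and used as a prompt to re-evaluate or retrain the model.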

MLOps borrows heavily from DevOps, which streamlines the practice of making software changes and updates. Both center on robust automation and trust between teams.

The MLOps pipeline can be broadly divided into four tasks:

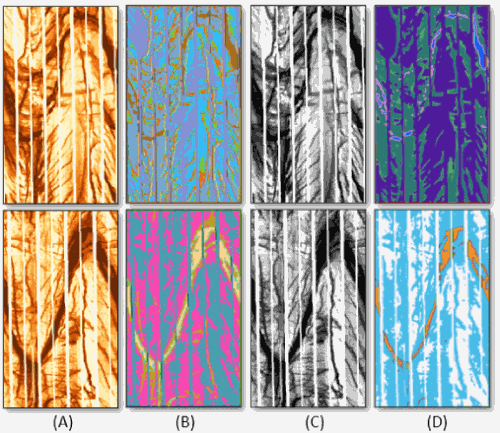

- Data Collection: Seismic sections are noisy, semi-structured datasets of seismic events collected from a specific region. The time-section data are stored in SEG-Y format. Detailed ETL pipelines convert the data from SEG-Y into NumPy arrays. A nice article on ETL processing can be found here

- Data Aggregation: This step combines data engineering and data science knowledge, with the goal of ensuring the quality, security, and integrity of the data.

- Model Training: Deep learning is an increasingly popular subset of machine learning. Deep learning models are built using neural networks.

- Deployment: Once the model is trained, you need to evaluate the results. It's important to understand what happens when a model gets deployed. Once deployed, you access the model through a RESTful API.
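The four tasks above can be sketched end to end in a few lines of Python. This is a minimal illustration under stated assumptions, not the pipeline used in the study: synthetic arrays stand in for traces read from SEG-Y files, a linear least-squares fit stands in for a deep learning model, and a plain function that takes and returns JSON stands in for a REST endpoint. All function names and the label definition are hypothetical.

```python
import json
import numpy as np

def collect_traces(n_traces=200, n_samples=64, seed=0):
    """Stage 1 (stand-in): synthetic 'seismic' traces as a NumPy array.
    A real pipeline would read SEG-Y files here instead."""
    rng = np.random.default_rng(seed)
    traces = rng.normal(size=(n_traces, n_samples))
    traces[::25, 0] = np.nan  # simulate a few corrupted traces
    return traces

def aggregate(traces):
    """Stage 2: quality control. Drop traces containing NaNs, then scale
    each remaining trace to a maximum absolute amplitude of 1."""
    clean = traces[~np.isnan(traces).any(axis=1)]
    return clean / np.abs(clean).max(axis=1, keepdims=True)

def add_bias(features):
    """Append a constant column so the linear model has an intercept."""
    return np.hstack([features, np.ones((features.shape[0], 1))])

def train(features, targets):
    """Stage 3 (stand-in): linear least squares instead of a neural network."""
    weights, *_ = np.linalg.lstsq(add_bias(features), targets, rcond=None)
    return weights

def handle_request(weights, body):
    """Stage 4 (stand-in): what a REST handler might do with a JSON body."""
    features = np.asarray(json.loads(body)["traces"], dtype=float)
    predictions = add_bias(features) @ weights
    return json.dumps({"predictions": predictions.tolist()})

traces = aggregate(collect_traces())
targets = traces[:, :8].sum(axis=1)  # hypothetical label for illustration
weights = train(traces, targets)

# Evaluate on the training data only to show the mechanics of the call.
request_body = json.dumps({"traces": traces[:2].tolist()})
response = handle_request(weights, request_body)
```

Keeping each stage as a separate function mirrors the division of tasks: any one stage can be replaced, for example by swapping in a real SEG-Y reader or a neural network, without touching the others.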